Author: G. Aydin

The AI in sports market reached 8.93 billion USD in 2024 and is projected to hit 60.78 billion USD by 2034. AI-powered platforms are spreading rapidly into youth sport: some organisations now offer video analysis and performance tracking to children as young as 8–9, at around 300 USD per year. Behind this growth, however, a crisis is building: children's biometric data, images and digital identities are being collected in environments where basic safeguards do not exist.

Using our SF4Sport methodology, we focused on this space together with AI Nexus Ireland. The work involved three interconnected layers:

Environmental scanning: identifying signals of change, emerging trends and potential wild cards across the AI-child protection-grassroots sport landscape.

Policy alignment: mapping these findings against EU policy frameworks including the EU AI Act (Regulation 2024/1689), GDPR, BIK+ Strategy, the Digital Services Act, Erasmus+ 2026 and the EU Sport Work Plan 2024–2027.

Evidence synthesis: reviewing 25+ peer-reviewed academic sources published between 2019 and 2026, alongside institutional reports from UNICEF, the OECD, the European Parliament, Childlight and others.

The picture that emerged demands urgent action. Here are 5 signals, 3 trends and 2 wild cards likely to shape the future of AI, data protection and child safety in European grassroots sport.

Signal 1 - 41% of Grassroots Clubs Have No Data Protection Policy

The Irish Data Protection Commission (DPC) surveyed over 100 sports clubs across four major sports in December 2024. The findings reveal a sector fundamentally unprepared for the AI age: 41% have no written data protection policy, 56% have no data retention schedule, and over half lack procedures for subject access requests. A third of staff and volunteers carry club data on personal devices. And 39% collect performance data, which the DPC noted likely qualifies as health data under GDPR, requiring enhanced protection.

These are not edge cases. These are mainstream grassroots clubs, the kind that work with children every day. The gap between what GDPR requires and what clubs actually do is not a minor compliance issue. It is a child safety gap.

When AI tools (performance tracking apps, video analysis platforms, wearable sensors) are layered onto this foundation, the data exposure multiplies. Children's biometric information enters systems that were never designed to protect it.

The baseline is broken. And AI is being built on top of it.

Signal 2 - AI-Generated Child Abuse Material Surged 26,000%

The Internet Watch Foundation reported that AI-generated child sexual abuse videos rose from 13 in 2024 to 3,440 in 2025, a 26,362% increase. UNICEF's 2026 study across 11 countries found at least 1.2 million children were victims of deepfake sexual content in a single year. The European Parliament estimates that 98% of all deepfakes are pornographic, and 17% involve a child.

This is not an abstract risk for grassroots sport. Children's photos and videos from training sessions and matches are routinely shared on social media, club websites and messaging groups. "Nudification" applications can generate explicit content from a single photograph within seconds. Every image shared without adequate safeguards is potential source material.

Childlight's 2025 report documented that technology-facilitated child abuse cases in the US rose from 4,700 in 2023 to 67,000 in 2024, a 1,325% increase in a single year. The UK's National Police Chiefs' Council reported that deepfake abuse increased 1,780% between 2019 and 2024.
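The percentage figures quoted in this section follow from the standard relative-change formula. The short sketch below reproduces the arithmetic for the two before-and-after pairs cited above; the function names are illustrative and not taken from any of the cited reports.

```python
def percent_increase(old: float, new: float) -> float:
    """Relative change from old to new, expressed as a percentage."""
    if old <= 0:
        raise ValueError("old must be positive")
    return (new - old) / old * 100


def fold_change(old: float, new: float) -> float:
    """How many times larger new is than old."""
    if old <= 0:
        raise ValueError("old must be positive")
    return new / old


# IWF figures: AI-generated abuse videos, 13 (2024) to 3,440 (2025).
print(f"IWF: {percent_increase(13, 3_440):,.1f}% increase")

# Childlight figures: US cases, 4,700 (2023) to 67,000 (2024).
print(f"Childlight: {percent_increase(4_700, 67_000):,.1f}% increase")
print(f"Childlight: {fold_change(4_700, 67_000):.1f}-fold")
```

A percentage increase of roughly 1,325% and a rise of about 14-fold describe the same change: the fold change counts the original level as well as the growth on top of it.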

Only 37% of parents are aware their children use AI tools. One in four parents wrongly believes their child does not use AI at all.

The explosion of AI-generated abuse material and the routine sharing of children's images in sport creates a convergence that grassroots clubs are not equipped to address.

Signal 3 - AI Talent Systems Are Biased by Design

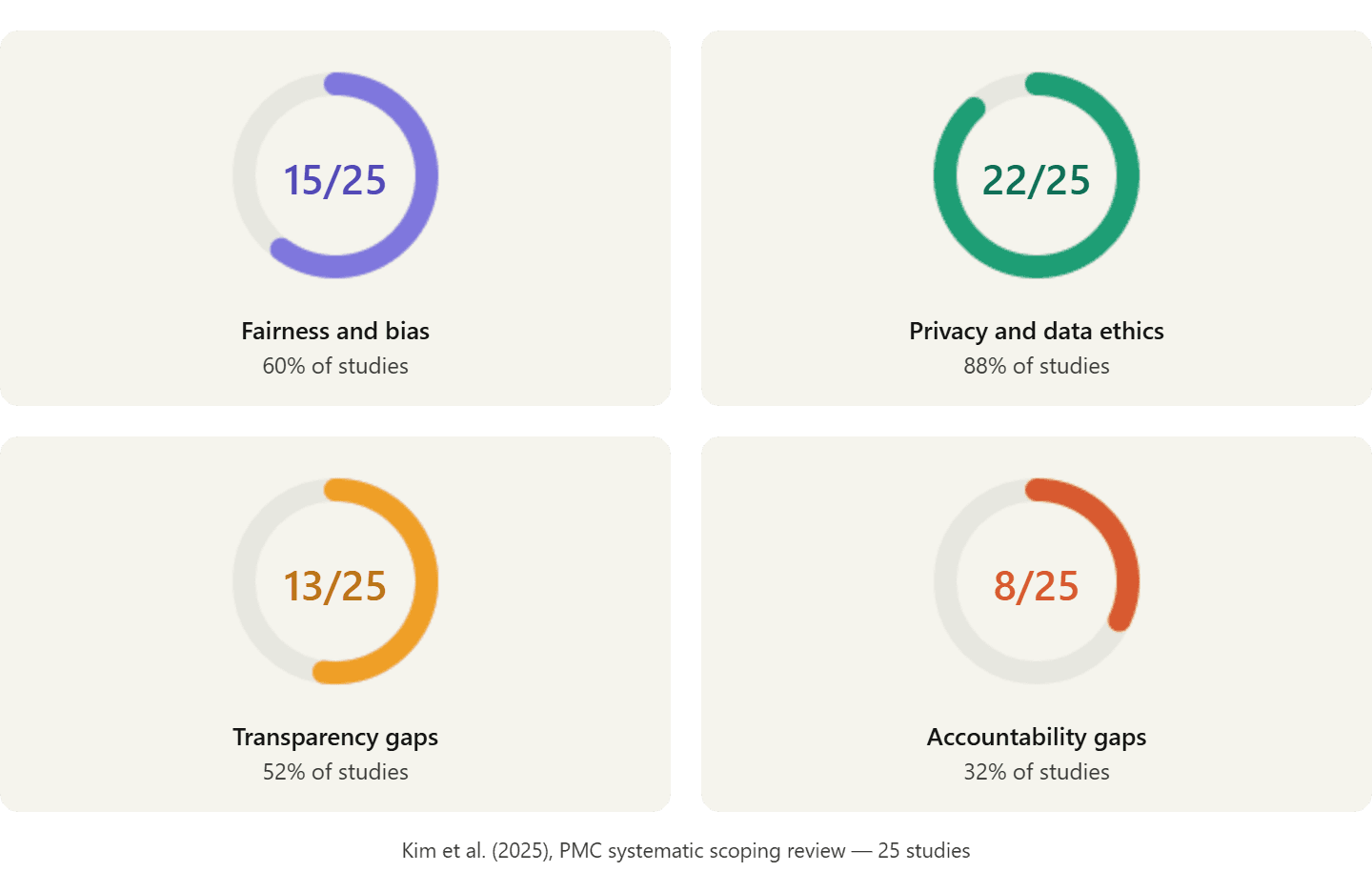

A systematic scoping review published in the Journal of Sport and Health Science (Kim et al., 2025) examined 25 studies on the ethical implications of AI in sport. The findings are sobering: 15 studies identified fairness and bias concerns, 22 flagged privacy and data ethics issues, 13 highlighted a lack of transparency and 8 found accountability gaps.

The structural problem runs deep. Training data for AI systems in sport is overwhelmingly drawn from elite male athletes. Young girls, athletes from ethnic minorities, disabled athletes and other marginalised groups are systematically underrepresented in the datasets that train these algorithms.

A separate narrative review on ethical bias in AI-driven injury prediction (MDPI, November 2025) examined 24 studies and identified five distinct ethical concerns: privacy and data protection, algorithmic fairness, informed consent, athlete autonomy and long-term data governance. The review found that sampling bias in sport science research is being reproduced and amplified through AI systems.

This means that at grassroots level, where AI talent identification tools are increasingly deployed, young girls, children from ethnic minorities and disabled youth risk being algorithmically filtered out before they ever get a fair chance. A child's sporting future should not be determined by a biased algorithm.

The bias is not a bug. It is baked into the data.

Signal 4 - Youth Sport Costs Rose 46% - AI May Widen the Gap

The Aspen Institute's State of Play 2025 report documented that youth sport costs have risen 46% since 2019. In the US alone, youth sport is a 54 billion USD sector. The participation gap between high-income and low-income families has reached 20.2 percentage points.

As AI tools (performance tracking subscriptions at 300 USD per year, wearable devices, data platforms, video analysis services) are layered onto existing cost structures, the digital divide risks deepening further. Children from families who can afford AI-enhanced coaching gain a measurable advantage. Children who cannot are left further behind.

The European Commission's SHARE 2.0 report confirmed that approximately two-thirds of European grassroots sport clubs report financial distress. These clubs cannot evaluate, adopt or govern AI tools responsibly, yet their athletes are increasingly expected to compete against those who benefit from AI-enhanced development.

The children who need sport most may be priced out entirely. AI risks turning a participation gap into a participation cliff.

Signal 5 - AI Performance Pressure Is Fuelling Youth Burnout

Burnout prevalence among athletes is estimated at 5–12%, according to meta-analyses by Gustafsson et al. A systematic review covering 54 studies found significant links between burnout and depression, with small-to-moderate effect sizes for anxiety.

Research by Fiedler et al. (2024) on 53 competitive athletes aged 12–27 found that even social media use, particularly TikTok, negatively affected sleep quality, recovery and stress levels. The mechanisms through which AI performance platforms affect young athletes' mental health are likely similar but more intense: continuous monitoring, algorithmic comparison, quantified ranking.

AI-powered continuous performance tracking creates what researchers describe as a "digital panopticon" effect. Every movement is tracked. Every metric is recorded. Every training session generates data that can be compared, ranked and shared. For developing adolescents whose self-image and self-regulation are still forming, this environment can fuel anxiety, burnout and ultimately dropout from sport.

A 2025 study in the Journal of Amateur Sport found that over 60% of more than 1,000 parents surveyed had witnessed inappropriate behaviour from other adults at youth sport events. When AI provides "objective" performance data, it amplifies parental pressure by giving it a veneer of scientific authority.

The joy of sport risks being replaced by algorithmic anxiety. And the children cannot opt out.

Trend 1 - Biometric Surveillance Is Reaching Youth Sport

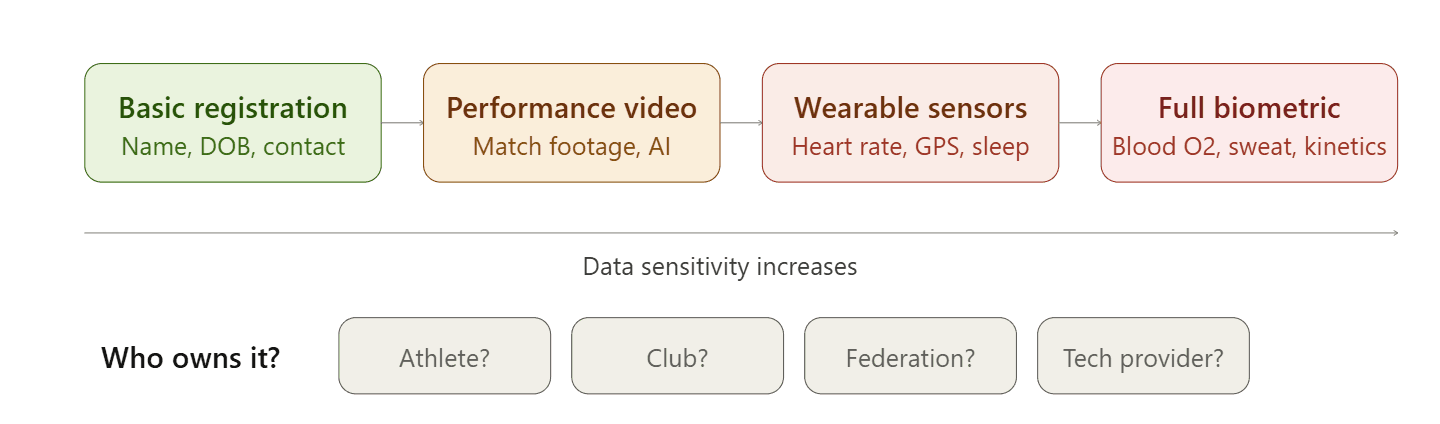

GPS vests, heart rate sensors, inertial measurement units and smart watches are no longer confined to elite sport. These devices collect heart rate variability, sleep metrics, sweat rates, blood oxygen levels and hundreds of kinematic data points. Computer vision technology, the fastest-growing segment in sport AI, with a CAGR of 30.3%, enables real-time posture estimation and behavioural analysis from video footage.

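Under GDPR Article 9(1), biometric data used to identify a person is, like health data, special category personal data and cannot be processed without explicit consent or another Article 9(2) exemption. Where an online service offered directly to children relies on consent, Article 8 requires parental consent for children under 16, an age threshold that member states may lower to 13.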

Yet grassroots clubs lack the capacity to comply. The DPC survey found that 56% of clubs collecting performance data were unaware that it likely qualifies as health data. Data ownership remains fundamentally unclear: does the data belong to the child, the parent, the club, the federation or the technology provider? A study by Kwon (2025) in Frontiers in Sports and Active Living proposed co-ownership models under GDPR and Korean PIPA, but no practical framework has been implemented.

The risk of children's biometric data being shared with commercial third parties, including betting companies and video game developers, is not hypothetical. A Dutch club was fined for inappropriate data sharing with a wearable device provider. The commercial incentive to monetise children's performance data will only grow.

The sensors are arriving. The safeguards are not.

Trend 2 - The Regulatory Framework Doesn't Fit Grassroots Sport

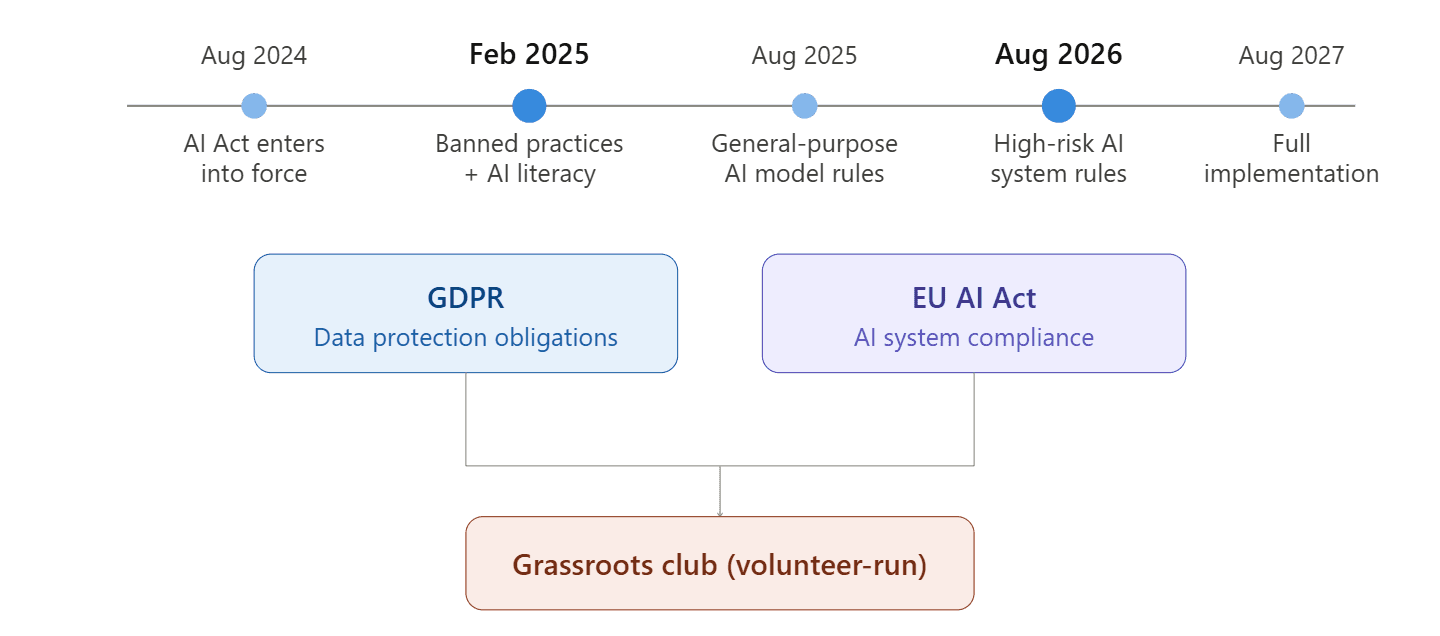

The EU AI Act entered into force in August 2024, with banned practices and AI literacy obligations effective from February 2025. General-purpose AI model rules apply from August 2025. High-risk AI system rules take effect in August 2026, with full implementation by August 2027.

Combined with GDPR, the AI Act creates what can be described as a "dual compliance burden" for grassroots clubs. The same data is subject to parallel but distinct obligations under both frameworks: data protection requirements under GDPR and AI system compliance requirements under the AI Act. For volunteer-run organisations with limited budgets and no legal expertise, navigating both simultaneously is effectively impossible.

Yet there is no sector-specific guidance for sport. No unified governing body coordinates AI governance across European sport. No tailored support exists for grassroots clubs navigating children's consent requirements, biometric data rules and algorithmic transparency obligations. The SHARE 2.0 report recommended that sport organisations actively shape AI developments to serve values like equity, inclusion and social responsibility, but offered no practical roadmap for grassroots implementation.

The regulation exists. The translation to grassroots reality does not.
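One small step towards that translation is to express the AI Act timeline described at the start of this section as data a club administrator could query. The sketch below is illustrative only: it works at month granularity because that is all the text above states, the names `MILESTONES` and `obligations_in_effect` are hypothetical, and it is no substitute for reading the Regulation's own application dates.

```python
from datetime import date

# (year, month) of each milestone, as listed in the article.
# Month granularity is an assumption of this sketch; the Regulation
# itself fixes exact days.
MILESTONES = [
    ((2024, 8), "AI Act enters into force"),
    ((2025, 2), "Banned practices and AI literacy obligations"),
    ((2025, 8), "General-purpose AI model rules"),
    ((2026, 8), "High-risk AI system rules"),
    ((2027, 8), "Full implementation"),
]


def obligations_in_effect(on: date) -> list[str]:
    """Return the milestones whose month has been reached by the given date."""
    return [label for ym, label in MILESTONES if ym <= (on.year, on.month)]


for label in obligations_in_effect(date(2026, 4, 15)):
    print(label)
```

For a date in April 2026, the function lists the first three milestones; the high-risk rules and full implementation are still to come.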

Trend 3 - AI-Enabled Grooming and Digital Threats Are Escalating

The UN's January 2026 Joint Statement on AI and Children's Rights declared that AI risks to children are outpacing society's capacity to respond. Childlight's 2025 report documented that technology-facilitated child abuse cases in the US increased 14-fold in a single year.

The UN warns that perpetrators are using AI to analyse a child's online behaviour, emotional state and interests in order to personalise grooming strategies. Grassroots sport club platforms (membership apps, social media groups, communication channels) create spaces where adults interact with children, and these spaces carry exploitation risks that most clubs have not assessed.

The US Federal Trade Commission launched an investigation into AI companion chatbots in September 2025, citing concerns about their influence on vulnerable users, especially children. Children tend to trust interactions that appear human-like, even when they are machine-generated. In grassroots sport, "AI coach" or "AI assistant" applications could create the appearance of emotional connection while collecting data or shaping behaviour in ways that are invisible to parents and club administrators.

The OECD's 2025 transparency report found that half of the world's 50 largest digital services offered only vague descriptions or no information about their child sexual exploitation detection measures. 33 services did not even define "child" or "minor" in their policies.

The digital threats are evolving faster than the defences. Grassroots sport clubs are on the front line, and most do not know it.

The Gap - Child Protection Standards Exist in Physical Sport, But Not in Digital Grassroots

This is the most important finding of the entire analysis.

GDPR compliance tools exist. Child safeguarding protocols exist. Ethical AI frameworks exist. Sport-based violence prevention programmes have been identified as having the strongest effect size in an umbrella review covering 16 meta-analyses. Programmes like Coaching Boys Into Men produce effective results in physical sport contexts.

On one side: GDPR, EU AI Act, BIK+ Strategy, DSA, child safeguarding standards, ethical AI principles. On the other side: grassroots clubs with no data policy (41%), no retention schedules (56%), volunteers carrying children's data on personal devices (33%), and AI tools being adopted without any impact assessment.

The critical gap is this: none of these frameworks have been systematically adapted to the digital grassroots environment. Zero sport-based child data protection programmes exist for the AI age. The technology is being adopted faster than the safeguards are being developed.

The EU policy architecture is comprehensive. The grassroots implementation is absent.

The protection exists in theory. In practice, children in grassroots sport are unprotected in the AI age.

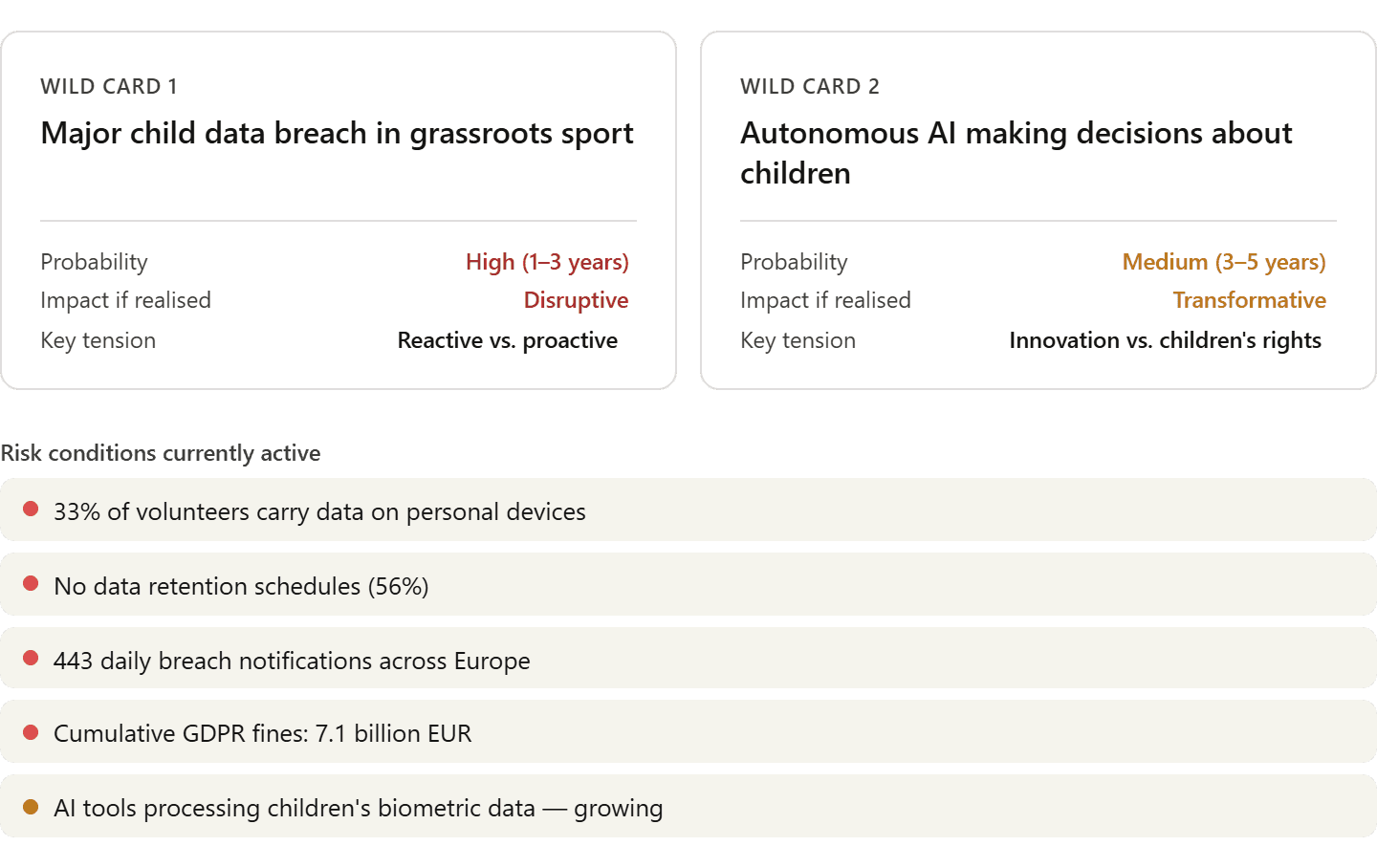

Wild Card 1 - What If a Major Child Data Breach Hits Grassroots Sport?

A wild card is a low-probability, high-impact event that could disrupt the entire landscape overnight.

The conditions are in place. 33% of club volunteers carry children's data on personal devices. 56% of clubs have no data retention schedules. AI tools are processing biometric information without adequate safeguards. Data breach notifications across Europe have risen to 443 per day. Cumulative GDPR fines have reached 7.1 billion EUR.

A single high-profile incident (a data breach exposing children's biometric data, a deepfake scandal involving images from a club's social media, or a grooming case facilitated through a club's digital platform) could trigger regulatory pressure overnight, force emergency policy changes and fundamentally reshape how grassroots clubs operate.

Unusually for a wild card, the probability here is high, within a 1–3 year window. The impact, if realised, would be disruptive. The key tension is between reactive and proactive approaches.

Organisations that have already built data protection policies, parental consent mechanisms and digital safeguarding protocols will be protected. Those that have not will face severe reputational, legal and operational exposure.

Wild Card 2 - What If Autonomous AI Starts Making Decisions About Children Without Oversight?

By 2030–2035, fully autonomous AI agents could manage club administration, talent identification and even training programmes, making decisions about children's sporting futures without meaningful human oversight.

The concept of a "digital sport twin" (lifelong tracking of a child's performance, biometric and behavioural data from their earliest participation in sport) raises fundamental questions about the right to be forgotten, childhood autonomy and the commodification of young athletes. As AI systems become more capable, the risk of "child valuation markets", where young athletes are quantified, ranked and traded as data assets before they can meaningfully consent, becomes increasingly real.

The probability is medium, within a 3–5 year window. The impact would be transformative. The key tension is between innovation and children's rights.

The EU AI Act explicitly bans AI systems that exploit the vulnerabilities of children. But the boundary between "personalised development support" and "exploitation of vulnerability" will be tested repeatedly as autonomous AI enters grassroots sport.

Early-mover organisations that establish human-oversight mechanisms and child-centred AI principles now will define the standards for the next decade.

So What Now?

These signals and trends make one thing clear: child protection in AI-powered grassroots sport is no longer a future concern. It requires urgent action now.

Three priority areas emerge from the analysis:

Priority 1 - Immediate data protection and parental consent

Every grassroots club collecting children's data needs a written data protection policy, a data retention schedule, subject access request procedures and a bring-your-own-device (BYOD) policy governing personal devices. Parental consent mechanisms must be clear, informed and revocable. The DPC survey shows that these basics are missing in a large share of clubs, and in some cases in the majority. Without this foundation, no AI tool can be deployed responsibly.

This aligns with GDPR Articles 8, 9, 25 and 35, the DPC's recommendations, and the Erasmus+ 2026 Programme Guide's safeguarding requirements.
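The baseline described under Priority 1 can be written down as a simple self-check. The sketch below is a minimal illustration, not a compliance tool: the class `ClubBaseline`, its field names and the helper functions are hypothetical, and the five items are taken directly from the paragraph above.

```python
from dataclasses import dataclass, fields


@dataclass
class ClubBaseline:
    """Hypothetical self-assessment record; every item defaults to missing."""
    written_data_protection_policy: bool = False
    data_retention_schedule: bool = False
    subject_access_request_procedure: bool = False
    byod_policy: bool = False
    revocable_parental_consent: bool = False


def missing_items(baseline: ClubBaseline) -> list[str]:
    """List the baseline items the club has not yet put in place."""
    return [f.name for f in fields(baseline) if not getattr(baseline, f.name)]


def ready_for_ai_tools(baseline: ClubBaseline) -> bool:
    """A club is treated as ready only when nothing is missing."""
    return not missing_items(baseline)


club = ClubBaseline(written_data_protection_policy=True, byod_policy=True)
print("Missing:", ", ".join(missing_items(club)))
print("Ready for AI tools:", ready_for_ai_tools(club))
```

Defaulting every field to `False` is deliberate: a club that has not answered a question is treated as not having the safeguard.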

Priority 2 - Child-centred AI design and mandatory impact assessment

Before any AI tool is deployed with young athletes, a Data Protection Impact Assessment must be conducted. Every AI tool should be evaluated through four lenses: data security, age-appropriateness, human oversight and transparency. Children's biometric data must not be used for profiling or commercial purposes under any circumstances.

This operationalises the EU AI Act's provisions on banned practices (exploitation of children's vulnerabilities), the BIK+ Strategy's three pillars and the DSA's guidelines on protection of minors.
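The four-lens evaluation under Priority 2 can be sketched as a pre-deployment gate. This is an illustrative outline under stated assumptions: the names `ToolAssessment`, `LENSES` and `blocking_issues` are hypothetical, each lens is reduced to a yes/no judgement that a real assessment would need to evidence, and the Data Protection Impact Assessment itself is a documented process rather than a single flag.

```python
from dataclasses import dataclass, field

# The four lenses named in the article.
LENSES = ("data_security", "age_appropriateness", "human_oversight", "transparency")


@dataclass
class ToolAssessment:
    """Hypothetical record of a club's review of one AI tool."""
    tool_name: str
    dpia_completed: bool = False
    lens_results: dict[str, bool] = field(default_factory=dict)
    biometrics_used_for_profiling_or_commercial: bool = False


def blocking_issues(assessment: ToolAssessment) -> list[str]:
    """Return every reason the tool should not yet be used with young athletes."""
    issues = []
    if not assessment.dpia_completed:
        issues.append("DPIA not completed")
    for lens in LENSES:
        # A lens that was never assessed counts as not satisfied.
        if not assessment.lens_results.get(lens, False):
            issues.append(f"lens not satisfied: {lens}")
    if assessment.biometrics_used_for_profiling_or_commercial:
        issues.append("biometric data used for profiling or commercial purposes")
    return issues


review = ToolAssessment(
    tool_name="example video analysis app",  # placeholder name
    dpia_completed=True,
    lens_results={"data_security": True, "transparency": True},
)
for issue in blocking_issues(review):
    print(issue)
```

An empty list from `blocking_issues` is the only outcome that clears the gate; unanswered lenses block deployment rather than passing by default.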

Priority 3 - AI literacy training for the grassroots ecosystem

Coaches, volunteers, parents and young athletes themselves need accessible, multilingual AI literacy training covering GDPR rights, ethical AI use and digital safeguarding. The EU AI Act's literacy obligations, effective from February 2025, provide the legal foundation. The SHARE 2.0 report's recommendation to leverage digitally aware younger members provides the practical pathway.

This aligns with the EU AI Act's literacy requirements, the AI Continent Action Plan's skills agenda and the Erasmus+ 2026 horizontal priority on Digital Transformation.

EU policy alignment

Our analysis found strong alignment between these findings and seven active EU policy frameworks. The strongest connections run through GDPR (Articles 8, 9, 17, 25, 35 and Recital 38), the EU AI Act (Regulation 2024/1689, banned practices and high-risk provisions), the BIK+ Strategy (three pillars: safe digital experience, digital empowerment, active participation), the Digital Services Act (Article 28, protection of minors guidelines), the EU Sport Work Plan 2024–2027 (integrity and values, mental health), the Erasmus+ 2026 Programme Guide (horizontal priorities on Digital Transformation and Inclusion & Diversity) and TFEU Article 165(2) on protecting the physical and moral integrity of the youngest sportspeople.

The convergence of these frameworks in the 2026–2028 window creates a unique and urgent opportunity for action.

This article is part of the SF4Sport Strategic Foresight Series by Sport Singularity, produced in partnership with AI Nexus Ireland. The analysis draws on 25+ peer-reviewed sources (2019–2026) and maps EU policy frameworks including the EU AI Act, GDPR, BIK+, DSA, Erasmus+ 2026 and the EU Sport Work Plan 2024–2027.

April 2026, Sport Singularity, SF4Sport