Authors: G. Aydin, H. Ege

Around 1.5 to 1.8 billion children play online games worldwide. The esports industry is growing exponentially. But behind this growth, a crisis is deepening: gender-based violence (GBV) is moving from physical fields to digital arenas, and often intensifying there.

Using our SF4Sport methodology, we examined this thematic area together with the Association for Justice and Rights in Sports (SAHADAYIZ). The work involved three interconnected layers:

Horizon scanning: identifying signals of change, emerging trends and potential wild cards across the online gaming and esports landscape.

Policy alignment: mapping these findings against EU policy frameworks including the Digital Services Act, Erasmus+ Sport, BIK+ and the EU Directive 2024/1385 on combating violence against women.

Evidence synthesis: reviewing 22 peer-reviewed academic sources published between 2020 and 2026, alongside institutional reports from the ADL, UNICEF, EIGE, Eurostat and others.

The picture that emerged is both alarming and a call to action. Here are five signals, two trends, one critical gap and two wild cards likely to shape the future of safety in digital sport environments.

Signal 1 - Harassment Is Becoming "Normal", and the Numbers Prove It

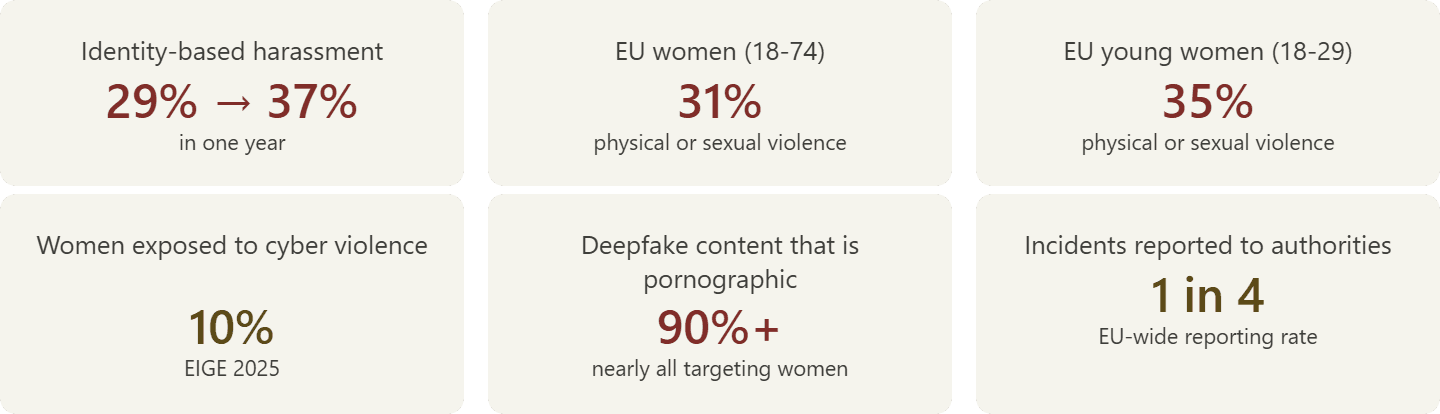

In the US, 76% of adult gamers, approximately 83 million people, experienced online harassment in the past six months, according to the Anti-Defamation League's 2024 report. Among young people aged 10-17, the rate is 75%, showing a year-on-year increase. Even more concerning is the rise in identity-based harassment. This form of targeted abuse, directed at players because of their gender, ethnicity, religion or sexual orientation, jumped from 29% to 37% in a single year.
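The jump in identity-based harassment can be read in two ways: as a change in percentage points, or as a relative increase. The short Python sketch below works through that arithmetic using only the figures quoted above. The function and variable names are ours, chosen for illustration, and the implied total number of adult gamers is simply back-calculated from the 76% and 83 million figures rather than taken from the ADL report.

```python
def percentage_point_change(before_pct: float, after_pct: float) -> float:
    """Absolute change between two percentages, in percentage points."""
    return after_pct - before_pct


def relative_change_pct(before_pct: float, after_pct: float) -> float:
    """Change expressed as a percentage of the starting value."""
    return (after_pct - before_pct) / before_pct * 100


# Identity-based harassment rates quoted in the text (29% -> 37%).
before, after = 29.0, 37.0
pp_change = percentage_point_change(before, after)
rel_change = relative_change_pct(before, after)

# Back-calculation (our assumption, not a reported figure):
# if 83 million people are 76% of adult gamers, the implied base is 83 / 0.76.
implied_adult_gamers_millions = 83 / 0.76

print(f"Change: {pp_change:.0f} percentage points")            # 8 percentage points
print(f"Relative increase: {rel_change:.1f}%")                 # 27.6%
print(f"Implied adult gamers: {implied_adult_gamers_millions:.1f} million")  # 109.2 million
```

In other words, an 8-point rise on a base of 29% means roughly a quarter more players reporting identity-based abuse than the year before.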

A 2025 experimental study by ADL tested what happens when players use usernames that express religious or ethnic identity in games like Valorant and CS2. Harassment was detected in over half of the sessions. When identity was made visible, the rate of identity-based abuse reached 57%. Beyond these headline figures, a study of 432 gamers (Kowert et al., 2024) found that 82.3% had been directly exposed to toxic behaviour, 88.1% had witnessed harassment, and 31% admitted to engaging in harassment themselves. Among those harassed, 23% reported withdrawing from social interactions, 15% felt isolated, and one in ten experienced depressive or suicidal thoughts.

A separate study published in Frontiers in Psychology (Wells et al., 2025) surveyed 602 gamers and found that 15% of adults and 9% of teens aged 13-17 had been exposed to white supremacist ideologies on gaming platforms, with 30% of those encountering such content at least once a week.

Harassment has become a structural feature of the gaming experience.

Signal 2 - Women Gamers Are Forced to Disappear

Around 70% of women gamers hide their gender while playing online. One in five quits gaming altogether due to harassment. These are not isolated anecdotes; they are findings from peer-reviewed research and major survey data.

A 2024 study published in Games and Culture (Crothers, Scott-Brown & Cunningham) examined the experiences of women esports participants and found systematic patterns of gender-based harassment, social exclusion, financial inequality and gender-based expectations. Social exclusion, previously under-documented, emerged as a critical negative factor in women's esports experiences.

In the broader EU context, the numbers are equally stark. According to the 2024 EU Gender-Based Violence Survey conducted by Eurostat, FRA and EIGE across all 27 member states, 31% of women aged 18-74, approximately 50 million women, have experienced physical or sexual violence in adulthood. Among young women aged 18-29, this figure rises to 35%. Only one in four incidents is reported to authorities. EIGE's 2025 input to the EU Gender Equality Strategy found that 10% of women have experienced some form of cyber violence. More than 90% of deepfake content is pornographic in nature, with nearly all of it targeting women.

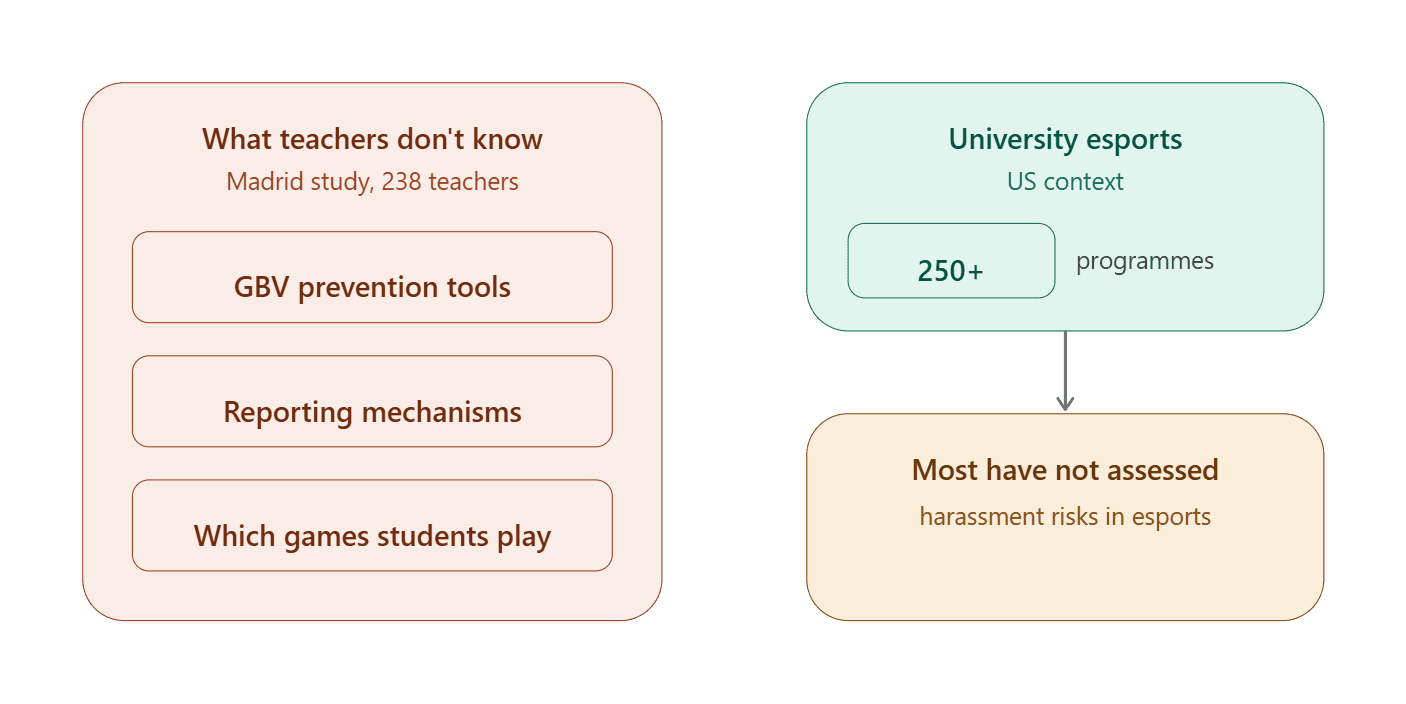

Over 250 universities in the US now run varsity esports programmes, yet according to Scientific American (2025), most have not assessed sexual harassment risks in the esports context. Universities are managing esports programmes reactively rather than proactively addressing foreseeable problems.

The evidence points to a clear conclusion: harassment is not merely pushing women to the margins of gaming; it is systematically erasing them from digital spaces.

Signal 3 - Children Are Unprotected in Digital Spaces

According to UNICEF Innocenti's 2025 working paper on protecting children in online gaming, criminal and violent organisations are actively using gaming platforms as tools to socialise children and draw them into violent environments. In high-income countries, 85-90% of children play online games. In the UK, 89% of children aged 3-17 play online; in the US, 85% of teens aged 13-17 do so.

A nationally representative study of 5,005 US adolescents aged 13-17 (Hinduja & Patchin, 2024, published in New Media & Society) found that young people in VR and metaverse environments experience hate speech, bullying, sexual harassment and grooming. Girls were significantly more likely than boys to experience sexual harassment and grooming behaviours, and were disproportionately targeted because of their gender. Critically, the vast majority of young users rarely use platform safety features.

According to Ygam/Mumsnet's 2025 report, children now spend an average of 20.4 hours per week gaming, up from 16.8 hours in 2024. NSPCC data shows that one in four young people aged 11-18 have been contacted by strangers through Fortnite.

The esports sector has not yet developed child protection regulations comparable to those in traditional physical sport: there are no systematic equivalents to DBS checks, designated protection officers or standardised safeguarding procedures.

Signal 4 - The Education System Is Unprepared

A 2025 study published in Social Sciences (García-Toledano et al.) surveyed 238 teachers in Madrid and found that the vast majority were unaware of GBV prevention tools, behavioural codes and reporting mechanisms for online gaming. Most teachers could not even identify which games their students play.

As noted under Signal 2, over 250 US universities run varsity esports programmes, and more than 900 institutions offer some form of esports activity. Yet most have not evaluated sexual harassment risks within the esports context under Title IX provisions, and Scientific American (2025) describes their approach as reactive rather than proactive.

This gap is not limited to formal education. The broader ecosystem of youth engagement with gaming (parents, community organisations and sports clubs) is equally unprepared for the scale and nature of the risks that children face in digital gaming environments.

Signal 5 - Regulatory Frameworks Don't Fit the Gaming Sector

The EU Digital Services Act (DSA) came into full effect in February 2024, bringing content moderation, transparency and child protection obligations for online platforms. However, child protection guidelines published as recently as July 2025 largely focus on social media, with limited provisions specific to gaming platforms.

The gaming industry experienced significant layoffs in 2023-2024, directly affecting trust, safety and security teams. The ADL has noted that this has weakened the industry's capacity to address harassment. There is no unified governing body for esports. Each organisation must develop its own child protection and GBV policies independently. While bodies like ESIC in the UK have published safeguarding guidelines, compliance remains voluntary.

Technology capable of automatically detecting harassment in voice chat is still in development. Only 55% of gaming companies currently have voice chat moderation tools, according to ADL's 2024 data.

From a legal perspective, academic commentary (Barker, 2024, ALTI Amsterdam Law & Technology Institute) argues that despite more than 30 years of regulatory efforts, online violence against women gamers remains largely uncontrolled. Existing legal frameworks, enforcement mechanisms and industry self-regulation are structurally inadequate.

Trend 1 - Toxic Masculinity Is Being Reproduced Digitally

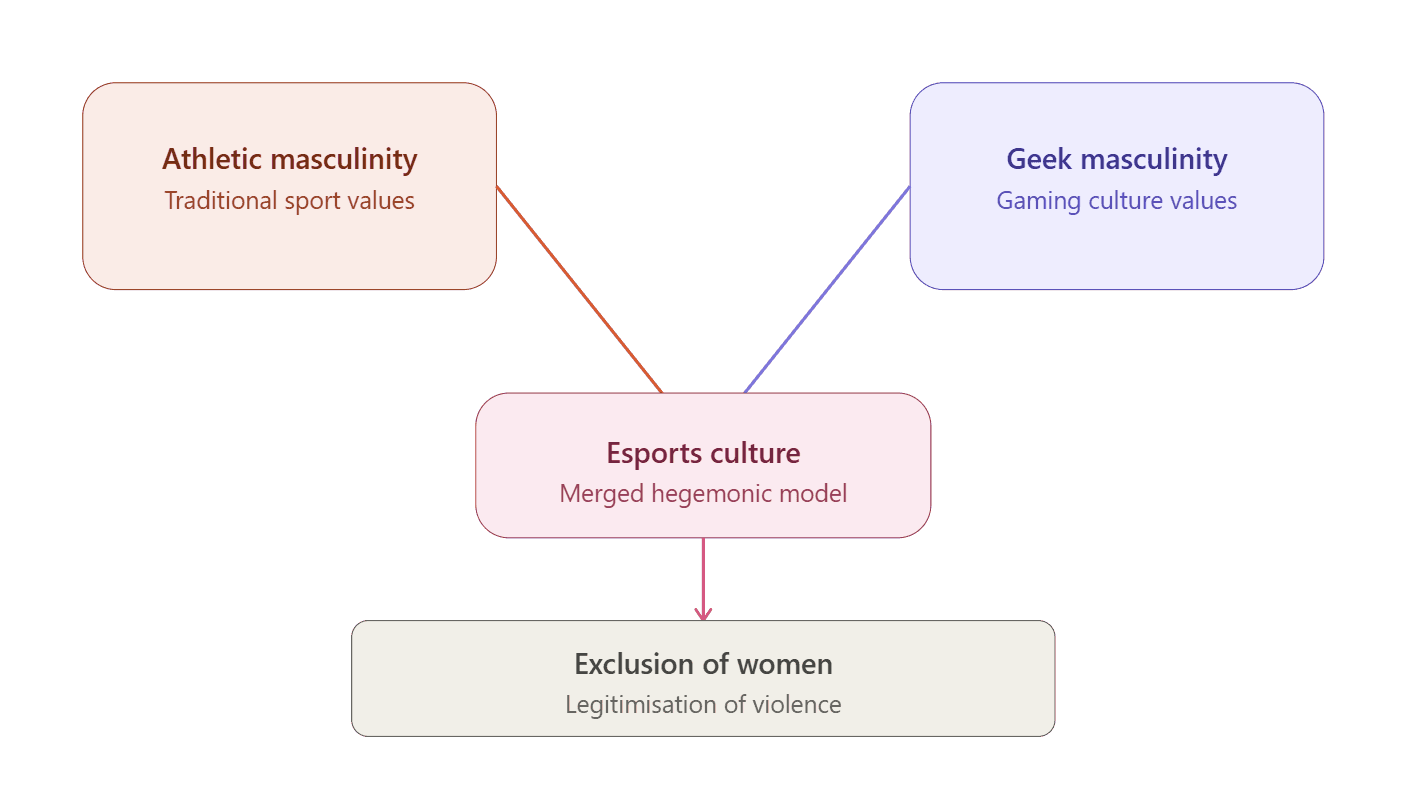

Esports environments create a unique space where traditional sport's hyper-masculine values merge with what researchers call "geek masculinity." This convergence produces a new form of hegemonic masculinity, a social order that excludes women, demeans non-conforming masculinities and legitimises violence.

Rogstad's 2021 systematic literature review, published in the European Journal of Sport and Society, identified how the combination of anonymity and low gender diversity in esports creates hostile environments for anyone who does not conform to dominant norms. Insecure men use sexual harassment as a "masculinity performance", a way to prove their status within the gaming hierarchy.

Ethnographic observations at events like EVO 2019 have documented how women's bodies are marginalised in esports spaces through physical absence or digital objectification. The majority of women gamers report muting their microphones or hiding their gender to avoid harassment.

A 2025 publication in PMC (Miller-Idriss) takes this further, arguing that sexist insults and hate speech in gaming platforms function as an "incubator" channelling everyday sexism towards violent extremism. Gender-based insults serve as "virtual masculinity acts" that reinforce patriarchal norms. A 2025 systematic review published in Discover Psychology by Springer Nature analysed 19 empirical studies and identified four core themes in women's esports experience: (i) a toxic atmosphere of denigration, discrimination and sexual objectification, (ii) women's motivation and representation, (iii) coping strategies, and (iv) interventions. "Toxic geek masculinity" operates as a force that legitimises an exclusionary order dominated by men.

The implications extend beyond gaming. If esports culture normalises misogyny and links it to identity performance, it becomes not just a gender equality issue but a radicalisation concern.

Trend 2 - GBV Is Expanding into Virtual Spaces

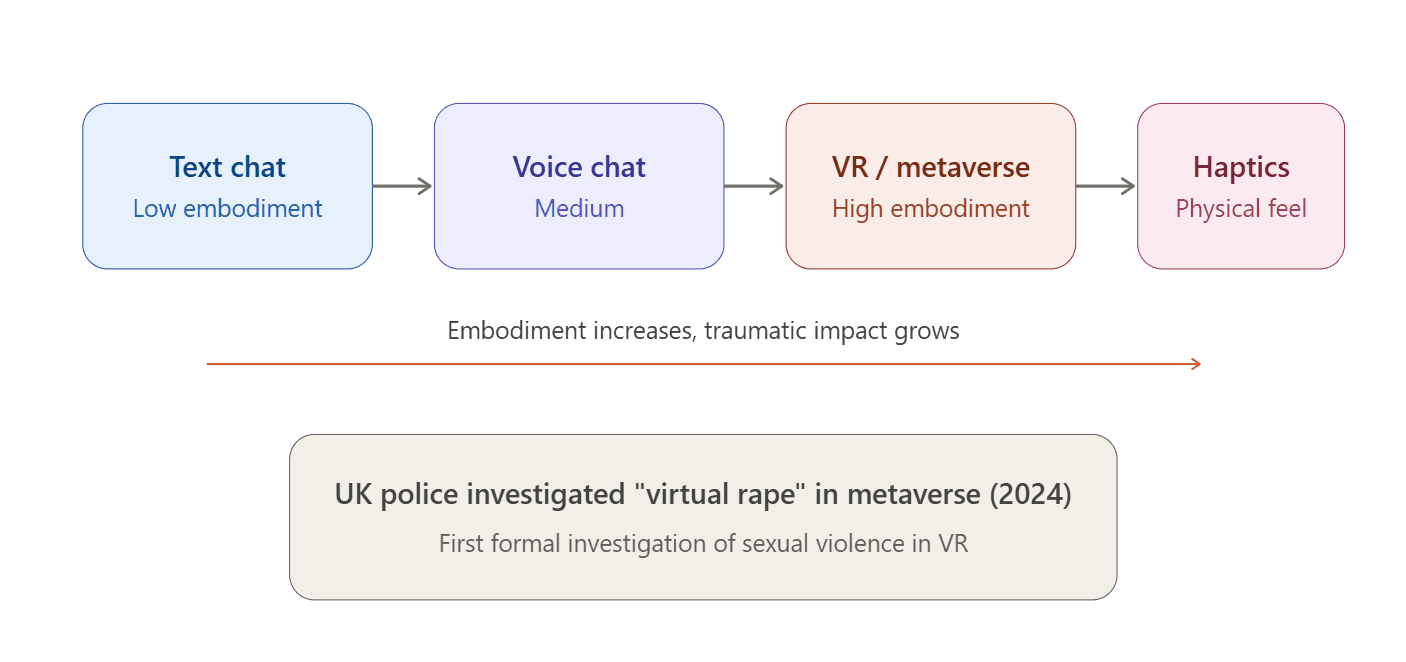

In VR and metaverse environments, the sense of "embodiment", the feeling of being physically present in a virtual space, dramatically amplifies the impact of GBV. What might feel like "just words" in a text chat becomes a visceral experience when it happens to your avatar in a 3D space.

In 2024, British police investigated a "virtual rape" case targeting a young girl in the metaverse, one of the first formal investigations to take sexual violence in VR seriously. Legal scholars McGlynn and Rackley (2025), writing in the Oxford Journal of Legal Studies, have developed the concept of "meta-rape" to position VR sexual violence within the continuum of physical sexual violence. They argue that existing criminal law may already apply to some forms of meta-assault.

Research from Florida Atlantic University (FAU) and the University of Wisconsin found that a significant proportion of US teens using VR experience hate speech, sexual harassment and grooming. In VR environments, five out of six female participants reported experiencing gender-based harassment from male-presenting avatars. As haptic technologies develop (gloves, suits and other devices that allow users to physically feel virtual interactions), the line between virtual harassment and physical abuse will continue to blur. Approximately 33% of US teenagers already own a VR device, yet platform safety features are rarely used.

The progression is clear: from text chat (low embodiment) to voice chat (medium) to VR/metaverse (high embodiment) to haptics (physical sensation). At each step, the traumatic impact of harassment intensifies. Yet regulatory and safeguarding frameworks have not kept pace with this technological evolution.

The Gap - Evidence-Based Programmes Haven't Gone Digital

This is perhaps the most important finding of the entire analysis.

Sport-based violence prevention initiatives were identified as having the strongest effect size in an umbrella review covering 16 meta-analyses (Fazel et al., 2024, a University of Oxford team, published in Trauma, Violence, & Abuse). Among all population-based approaches to violence prevention, including early childhood, youth development and programmes targeting male perpetrators of sexual assault, sport-based initiatives produced the most robust outcomes.

The evidence for specific programmes is compelling. Coaching Boys Into Men (CBIM), tested through a cluster randomised clinical trial of 973 male athletes published in JAMA Pediatrics (Miller et al., 2020), demonstrated significant increases in positive bystander intervention behaviours among athletes who participated in the programme, alongside reductions in relationship violence perpetration among those who had previously dated.

Football Onside, a bystander intervention programme tested in professional football settings (Kovalenko & Fenton, 2024, Journal of Interpersonal Violence), showed promising results in a quasi-experimental feasibility study with a 9-month follow-up. The study highlights sport as a unique platform for bystander approaches, while noting that the evidence base for bystander interventions in sport remains limited. International frameworks already recognise this potential. UNODC and the IOC jointly published a 2024 policy guide on preventing youth crime and violence through sports, built on the UNODC Line Up Live Up programme and the IOC Olympic Values Education Programme (OVEP), linked to SDG targets and the Olympism365 strategy.

Yet the critical gap remains: none of these programmes have been systematically adapted to digital gaming or esports. The "coach" figure in online gaming, whether a team captain, a streamer, or a tournament organiser, has not been engaged as a prevention agent. The transfer from physical to digital has simply not happened.

This represents both the biggest blind spot and the biggest opportunity identified in this analysis.

Wild Card 1 - What If AI Transforms Gaming Culture?

A wild card is a low-probability, high-impact event that could disrupt the entire landscape overnight.

AI technology capable of automatically detecting harassment in voice chat is under development. Current voice moderation tools exist in only 55% of gaming companies, and most rely on text-based filtering that misses the vast majority of abuse delivered verbally.

If real-time AI voice moderation matures to a reliable standard, it could fundamentally change harassment dynamics in gaming. Imagine a system that detects hate speech, sexual harassment and bullying in voice chat as it happens, flagging, muting or escalating in real time.
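To make the "flag, mute or escalate" idea concrete, here is a minimal Python sketch of how a moderation layer might map a classifier's output to an action. Everything in it is hypothetical: the category labels, the confidence thresholds and the rule that sexual harassment goes to human review are our illustrative assumptions, not a description of any existing product or of ADL's data.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    NONE = "none"
    FLAG = "flag"          # log for later review, no immediate effect
    MUTE = "mute"          # silence the speaker for the session
    ESCALATE = "escalate"  # hand over to a human moderator


@dataclass(frozen=True)
class Detection:
    category: str      # hypothetical labels: "hate_speech", "sexual_harassment", "bullying"
    confidence: float  # classifier confidence between 0.0 and 1.0


# Hypothetical thresholds; real systems would tune these against false-positive rates.
FLAG_THRESHOLD = 0.50
MUTE_THRESHOLD = 0.80
ESCALATE_CATEGORIES = {"sexual_harassment"}


def choose_action(detection: Detection) -> Action:
    """Map one detection to a moderation action under the assumed thresholds."""
    if not 0.0 <= detection.confidence <= 1.0:
        raise ValueError("confidence must be between 0.0 and 1.0")
    if detection.confidence < FLAG_THRESHOLD:
        return Action.NONE
    if detection.confidence < MUTE_THRESHOLD:
        return Action.FLAG
    if detection.category in ESCALATE_CATEGORIES:
        return Action.ESCALATE
    return Action.MUTE


if __name__ == "__main__":
    for d in (
        Detection("bullying", 0.30),
        Detection("hate_speech", 0.65),
        Detection("hate_speech", 0.90),
        Detection("sexual_harassment", 0.95),
    ):
        print(d.category, d.confidence, "->", choose_action(d).value)
```

Keeping a low-confidence "flag only" band is one way to limit the cost of false positives: uncertain detections are recorded rather than acted on, which is relevant to the privacy and censorship concerns such systems raise.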

However, the tensions are real. Privacy concerns around continuous voice monitoring, false positive rates that could silence legitimate expression, and censorship fears from the gaming community will be serious points of debate. The technology is likely still 3-5 years from maturity, and its impact, if realised, would be transformative.

Wild Card 2 - What If Esports Has Its #MeToo Moment?

A major sexual harassment or child abuse scandal going public in esports could bring the sector face to face with regulatory pressure overnight, much like the #MeToo movement transformed Hollywood and corporate culture.

Although wild cards are usually low-probability events, we judge the probability of this one to be high within a 1-3 year window. The ingredients are already present: widespread harassment, inadequate safeguarding, millions of young participants, and a sector that has grown faster than its governance structures. If it happens, the impact would be disruptive. Sponsors, broadcasters and platform holders would face immediate pressure to act. Regulatory bodies would fast-track gaming-specific provisions. The sector would be forced to choose between reactive damage control and proactive structural reform.

The key tension is between reactive and proactive responses. Organisations that have already invested in prevention, safeguarding and training will be positioned to lead. Those that have not will face an existential challenge.

This is both a risk and an opportunity window, and the analysis suggests the window is narrowing.

So What Now?

These signals and trends make one thing very clear: GBV prevention in digital sport environments is no longer "a topic for the future"; it is an area requiring urgent action now.

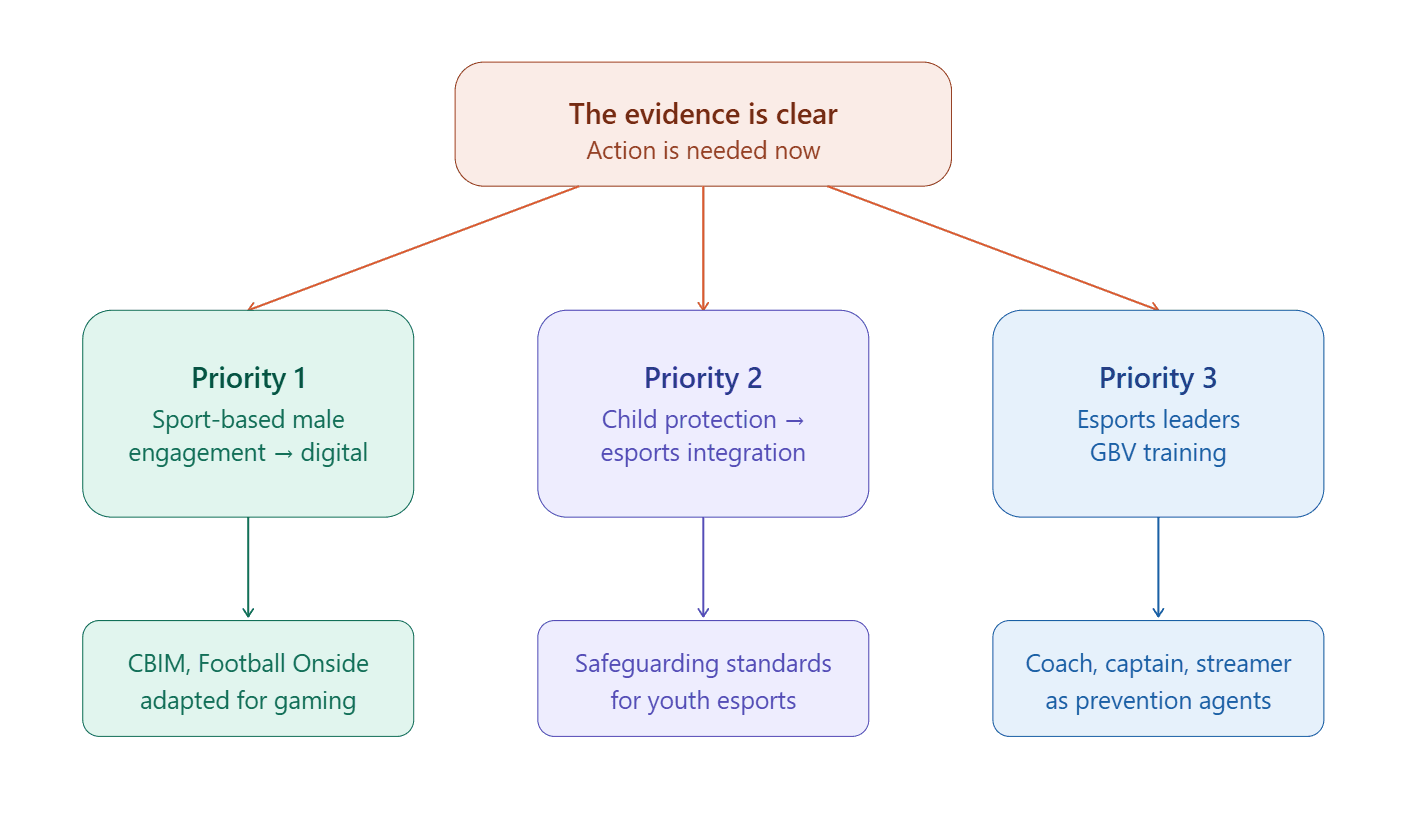

Three priority areas emerge from the analysis:

Priority 1 - Sport-based male engagement programmes need to go digital

Proven approaches like Coaching Boys Into Men and Football Onside should be adapted for online gaming and esports environments. The "coach" role, whether embodied by a team captain, a community moderator or a streamer, offers a natural entry point for prevention messaging. The evidence base from physical sport is strong; the adaptation to digital contexts is the missing step. This aligns with Erasmus+ Sport KA2 funding priorities around innovation and digital transformation.

Priority 2 - Child protection mechanisms must be integrated into esports

Safeguarding standards that exist in physical sport (background checks, designated protection officers, codes of conduct and systematic reporting procedures) are largely absent from the esports sector. With at least 1.5 billion children playing online and criminal organisations actively exploiting gaming platforms, this gap is both urgent and high-impact. The EU Digital Services Act (Article 28), the BIK+ strategy and the EU Child Rights Strategy all provide strong policy foundations.

Priority 3 - Esports leaders need GBV prevention training

Coaches, team captains, tournament organisers and content creators operate at the frontline of gaming culture. They set norms, model behaviour and shape community expectations. Equipping these leaders with the knowledge and tools to recognise, prevent and respond to gender-based violence would create a distributed network of prevention agents across the esports ecosystem. This aligns with the EU Sport Work Plan and the High-Level Group on Gender Equality in Sport.

EU policy alignment

Our analysis found strong alignment between these findings and existing EU policy frameworks. The strongest connections run through Erasmus+ Sport KA2, the EU Directive 2024/1385 (on combating violence against women and domestic violence), and the DSA's child protection provisions. The project demonstrates a rare degree of multi-policy fit within the EU architecture, making this an area where funding, evidence and political will could converge.

This article is part of the SF4Sport Strategic Foresight Series by Sport Singularity, produced in partnership with the Association for Justice and Rights in Sports (SAHADAYIZ). The analysis draws on 22 peer-reviewed sources (2020–2026) and maps EU policy frameworks including the DSA, Erasmus+ Sport, BIK+ and Directive 2024/1385.

March 2026, Sport Singularity, SF4Sport

References

Academic and Peer-Reviewed Research

Barker, K. (2024). Online Violence Against Women Gamers — A Reflection on 30+ Years of Regulatory Failures. ALTI Amsterdam Law & Technology Institute.

Crothers, H., Scott-Brown, K. C., & Cunningham, S. J. (2024). 'It's just not safe': Gender-based harassment and toxicity experiences of women in esports. Games and Culture. https://doi.org/10.1177/15554120241273358

Fazel, S., Burghart, M., Wolf, A., Whiting, D., & Yu, R. (2024). Effectiveness of violence prevention interventions: Umbrella review of research in the general population. Trauma, Violence, & Abuse, 25(2), 1709–1718. https://doi.org/10.1177/15248380231195880

García-Toledano, B., et al. (2025). Preventing harassment and gender-based violence in online videogames through education. Social Sciences, 14(5), 297. https://doi.org/10.3390/socsci14050297

Hartill, M., Rulofs, B., Allroggen, M., Demarbaix, S., Diketmüller, R., Lang, M., et al. (2023). Prevalence of interpersonal violence against children in sport in six European countries. Child Abuse & Neglect, 146, 106513. https://doi.org/10.1016/j.chiabu.2023.106513

Hinduja, S., & Patchin, J. W. (2024). Metaverse risks and harms among US youth: Experiences, gender differences, and prevention and response measures. New Media & Society. https://doi.org/10.1177/14614448241284413

Kovalenko, A. G., & Fenton, R. A. (2024). Bystander intervention in football and sports. Journal of Interpersonal Violence. https://doi.org/10.1177/08862605241239452

Kowert, R., et al. (2024). Toxicity and harassment study. [432 participant study].

McGlynn, C., & Rackley, E. (2025). From virtual rape to meta-rape: Sexual violence, criminal law and the metaverse. Oxford Journal of Legal Studies. https://doi.org/10.1093/ojls/gqaf009

Miller, E., et al. (2020). An athletic coach-delivered middle school gender violence prevention program: A cluster randomized clinical trial. JAMA Pediatrics, 174(2), 143–151. https://doi.org/10.1001/jamapediatrics.2019.4475

Miller-Idriss, C. (2025). Misogyny incubators: How gaming helps channel everyday sexism into violent extremism. PMC.

Rogstad, E. T. (2021). Gender in eSports research: A literature review. European Journal of Sport and Society, 18(3), 271–293. https://doi.org/10.1080/16138171.2021.1930941

Santisteban, M., et al. (2025). GamerVictim Project. Universidad Miguel Hernández / Crimina Center. [EurekAlert press release, February 2025].

Springer Nature. (2025). A systematic review of the current state and challenges to the representation of women in esports. Discover Psychology. https://doi.org/10.1007/s44202-025-00387-8

Taylor, T. L. (2012). Raising the Stakes: E-Sports and the Professionalization of Computer Gaming. MIT Press.

Wells, G., Romhányi, Á., & Steinkuehler, C. (2025). Hate speech and hate-based harassment in online games. Frontiers in Psychology, 15, 1422422. https://doi.org/10.3389/fpsyg.2024.1422422

Institutional and Industry Research

Anti-Defamation League. (2024). Hate is No Game: Hate and Harassment in Online Games 2023. ADL Center for Technology and Society.

Anti-Defamation League. (2025). Playing with Hate: How Online Gamers with Diverse Identity Usernames are Treated. ADL Center for Technology and Society.

NSPCC. (2024). Online gaming safety data. National Society for the Prevention of Cruelty to Children.

Scientific American. (2025). Universities Need to Address Sexual Harassment in the Gaming They Sponsor.

Ygam & Mumsnet. (2025). Children's gaming habits report.

EU Policy Frameworks and Legislation

Directive 2024/1385 of the European Parliament and of the Council on combating violence against women and domestic violence.

Regulation (EU) 2022/2065 — Digital Services Act (DSA). Full application from February 2024.

European Commission. Better Internet for Kids (BIK+) Strategy.

European Commission. EU Strategy on the Rights of the Child (2021).

European Commission. EU Gender Equality Strategy 2020–2025.

Council of the European Union. EU Work Plan for Sport.

European Commission. Erasmus+ Programme, Sport Chapter, Key Action 2.

European Commission. Digital Education Action Plan 2021–2027.

Regulation (EU) 2024/1689 — EU Artificial Intelligence Act.

European Commission. High-Level Group on Gender Equality in Sport.

EIGE. (2025). Input to the 2026–2030 Gender Equality Strategy. European Institute for Gender Equality.

Eurostat, FRA, & EIGE. (2024). EU Gender-Based Violence Survey — Key Results: Experiences of Women in the EU-27. Publications Office of the European Union.

International Frameworks and Standards

UNODC & IOC. (2024). Preventing Youth Crime and Violence Through Sports: A Policy Guide. SC:ORE Programme.

UNICEF Innocenti. (2025). Protecting Children in Online Gaming: Mitigating Risks from Organized Violence. UNICEF Office of Research — Innocenti. Working Paper.

International Olympic Committee. Olympism365 Strategy.

United Nations. Sustainable Development Goals (SDGs), in particular SDG 5 (Gender Equality), SDG 16 (Peace, Justice and Strong Institutions).

Futures Without Violence & Office on Violence Against Women (OVW). Engaging Men to End Violence Centre.

UN Women. Spotlight Initiative — Sport for Prevention campaigns (Nigeria, Argentina).

ESIC. (2024). Safeguarding guidelines for esports. Esports Integrity Commission, United Kingdom.